Automated security kiosk could alleviate travel, border woes

Posted by Greg Katski

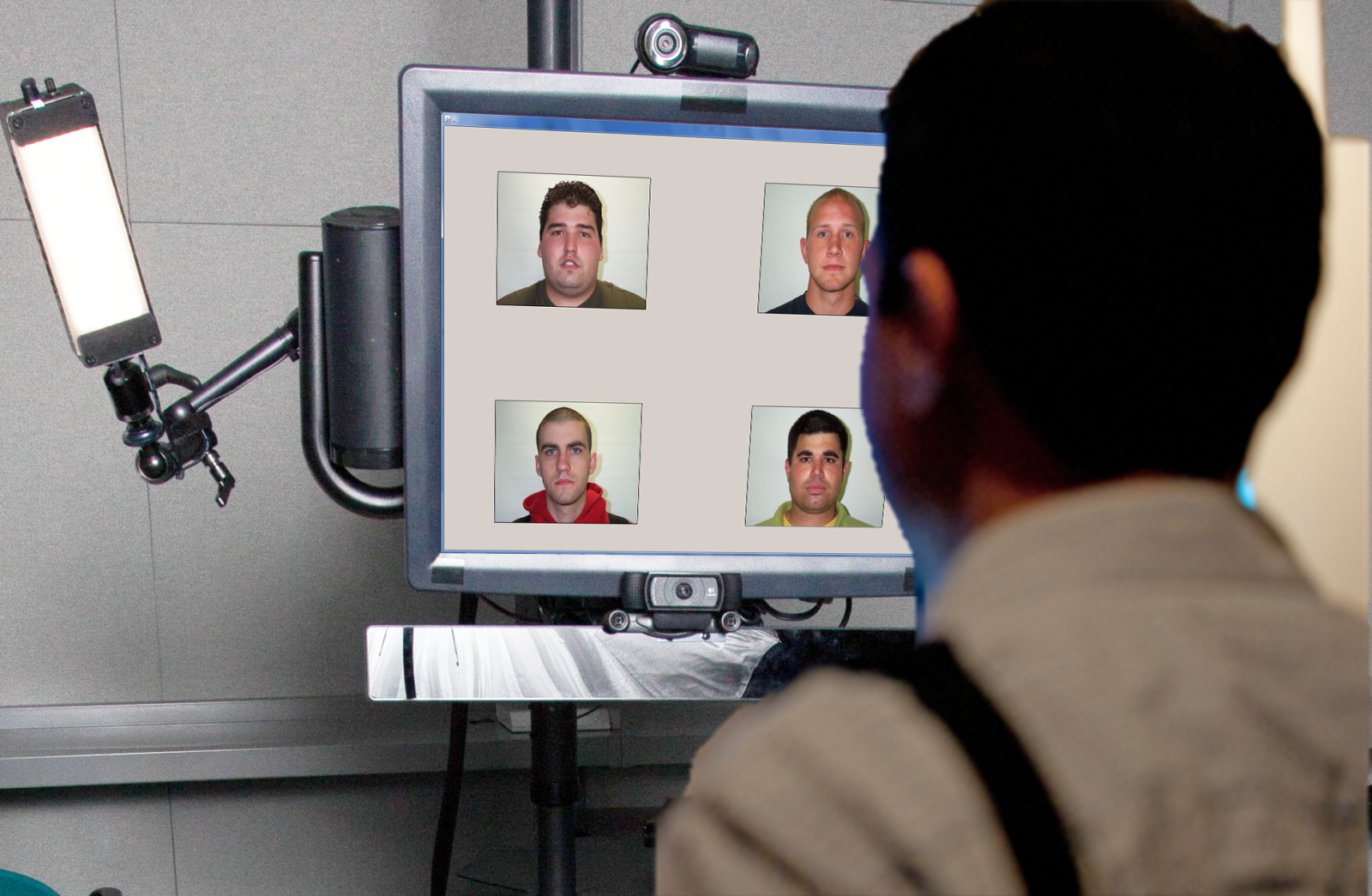

An early prototype of the automated screening kiosk.

A mock-up of Dr. Nathan Twyman’s automated screening kiosk. Illustration by Mark Williams/Missouri S&T

An automated screening kiosk developed by a Missouri University of Science and Technology researcher could alleviate concerns about safety and wait time at U.S. airports and border crossings.

Dr. Nathan Twyman, assistant professor of business and information technology at Missouri S&T, has been developing a next-generation automated screening kiosk since he was a Ph.D. student at the University of Arizona. The kiosk uses a computer-generated avatar to ask a structured series of “yes” or “no” questions and quickly assess the potential threat a passenger may pose to others.

Twyman says the screening can be completed in less than four minutes with a 90 percent success rate.

The kiosk’s speed and success rate are better than those of security screening at most airports in the United States, Twyman says. An internal investigation by the Transportation Security Administration in 2015 found that TSA agents failed to identify explosives and banned weapons about 95 percent of the time. The investigation was carried out by undercover Homeland Security “Red Teams” that posed as passengers. In 67 of 70 attempts, the investigators got through security with mock explosives or banned weapons without being detected.

Typically, when travelers enter the United States on an international flight, they must go through a U.S. Customs and Border Protection (CBP) area where a CBP officer screens them. Depending on their answers, the officer might ask them one question or a handful of questions. The process can be alarmingly subjective, Twyman says.

Twyman’s automated screening kiosk eliminates this subjectivity.

“We don’t want random,” says Twyman. “(The kiosk) is not profiling. It’s not pulling you aside because of your religion. And on top of that, it’s not going to get tired. It’s not going to make mistakes because it’s ready to go on a break. It’s a lot better than a human solution. Humans are really bad at risk assessment, as it turns out.”

Twyman’s screening kiosk asks a series of basic “yes” or “no” questions and measures the user’s responses through a variety of techniques. An infrared camera scans a subject’s eye movement and pupil dilation; a video camera captures natural reactions to feeling threatened, such as body and facial rigidity; and a microphone records vocal data, listening for changes in pitch that accompany uncertainty.

“This (screening kiosk) measures various psychophysiological responses and tries to make some sort of a risk assessment outcome,” says Twyman. “It’s an automated risk assessment, instead of a seat-of-your-pants risk assessment. There’s a controlled, structured process for it. It gives (CBP officers) some more hope that they can pick out who they need to pick out instead of patting down grandma at the airport.”


When users approach the kiosk, they are greeted by a computer-generated image of a young man with black hair. The talking head gets right to the point with questions such as, “Have you ever been arrested?” and “Have you moved in the past five years?” When the avatar is done with its questions, the machine reports its findings question by question to the CBP officers or border patrol agents on duty. Risk is color-coded: green indicates low risk, yellow moderate risk and red high risk.
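The question-by-question, color-coded report described above can be pictured with a minimal Python sketch. This is illustrative only, not Twyman's implementation: the article does not say how the kiosk scores responses, so the 0-to-1 `risk_score`, the two threshold values, and the names `QuestionResult`, `risk_color` and `build_report` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical cutoffs; the article does not say how scores map to colors.
YELLOW_THRESHOLD = 0.4
RED_THRESHOLD = 0.7


@dataclass
class QuestionResult:
    question: str
    risk_score: float  # assumed 0.0-1.0 value produced by the sensor analysis


def risk_color(score: float) -> str:
    """Map an assumed 0-1 risk score to the green/yellow/red scheme."""
    if score >= RED_THRESHOLD:
        return "red"
    if score >= YELLOW_THRESHOLD:
        return "yellow"
    return "green"


def build_report(results):
    """Return one (question, color) pair per question, in the order asked."""
    return [(r.question, risk_color(r.risk_score)) for r in results]


# Made-up scores for the two example questions quoted in the article.
results = [
    QuestionResult("Have you ever been arrested?", 0.82),
    QuestionResult("Have you moved in the past five years?", 0.15),
]
report = build_report(results)
for question, color in report:
    print(f"{color:<6} {question}")
```

Keeping one color per question, rather than a single overall score, mirrors the article's description of findings reported question by question to the officer on duty.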

Twyman prefers this straightforward style of questioning to that of other automated security systems, which he says can be more conversational.

“I made the kiosk as scientifically controlled as possible,” he says. “(The avatar) doesn’t care if it’s your friend or not. It isn’t interested in asking you how your day went and trying to pretend that it’s human. This doesn’t pretend that it’s human. It follows the most scientifically accepted approach to human risk assessment. It gives you a really solid baseline to work with. When someone’s telling you the truth, compare that to when they’re lying. Without that baseline, it’s hard to really gauge people. For the kiosk, that baseline is very important.”

Twyman has conducted field studies at a border crossing in Nogales, Arizona; a field experiment at a TSA Systems Integration Facility in Washington, D.C.; and a field experiment in Apeldoorn, Netherlands, using European Union border guards. Now, he’s hoping to implement the kiosks in real-world situations.

“We’ve been all over the world doing these various tests,” he says. “We’ve got good data, but we need to actually be able to implement it in a real-world scenario. It’s hard to sell it as the perfect solution if you don’t have the real-world data.”

Twyman’s research group is in talks with the government of Singapore to implement the kiosks at the border crossing between Malaysia and Singapore, a geographically small city-state at the southern tip of the Malay Peninsula.

“We’re trying to get it out in the field, such as the Singapore-Malaysia border. Places we can essentially do a test evaluation at a real border,” says Twyman. “For them, speed is crucial.”

According to the Malaysian government’s 2015 immigration data, more than 250,000 Malaysians cross the country’s border into Singapore twice daily for work.

“That’s a lot of people to screen,” says Twyman. “They need to be able to pick out the needle in the haystack without talking to every single individual. A bank of kiosks that can process a number of people all at once and set aside only a few people for more in-depth screening could be a really big benefit to them.”

Twyman has received numerous grants from the National Science Foundation for his research. His most recent journal article associated with the research, “Robustness of Multiple Indicators in Automated Screening Systems for Deception Detection,” was published in the April 2016 issue of the Journal of Management Information Systems.

I take it that as a non-hearing person I’ll just need to skip the kiosks. I hope there are signs to where to go.

Hi David,

I am the author of the article, and just talked to Dr. Twyman. He says his research team is developing a text-only version of the kiosk, so the hearing impaired could use that option. Please let us know if you have any more questions or concerns.

Thanks!

-Greg Katski
